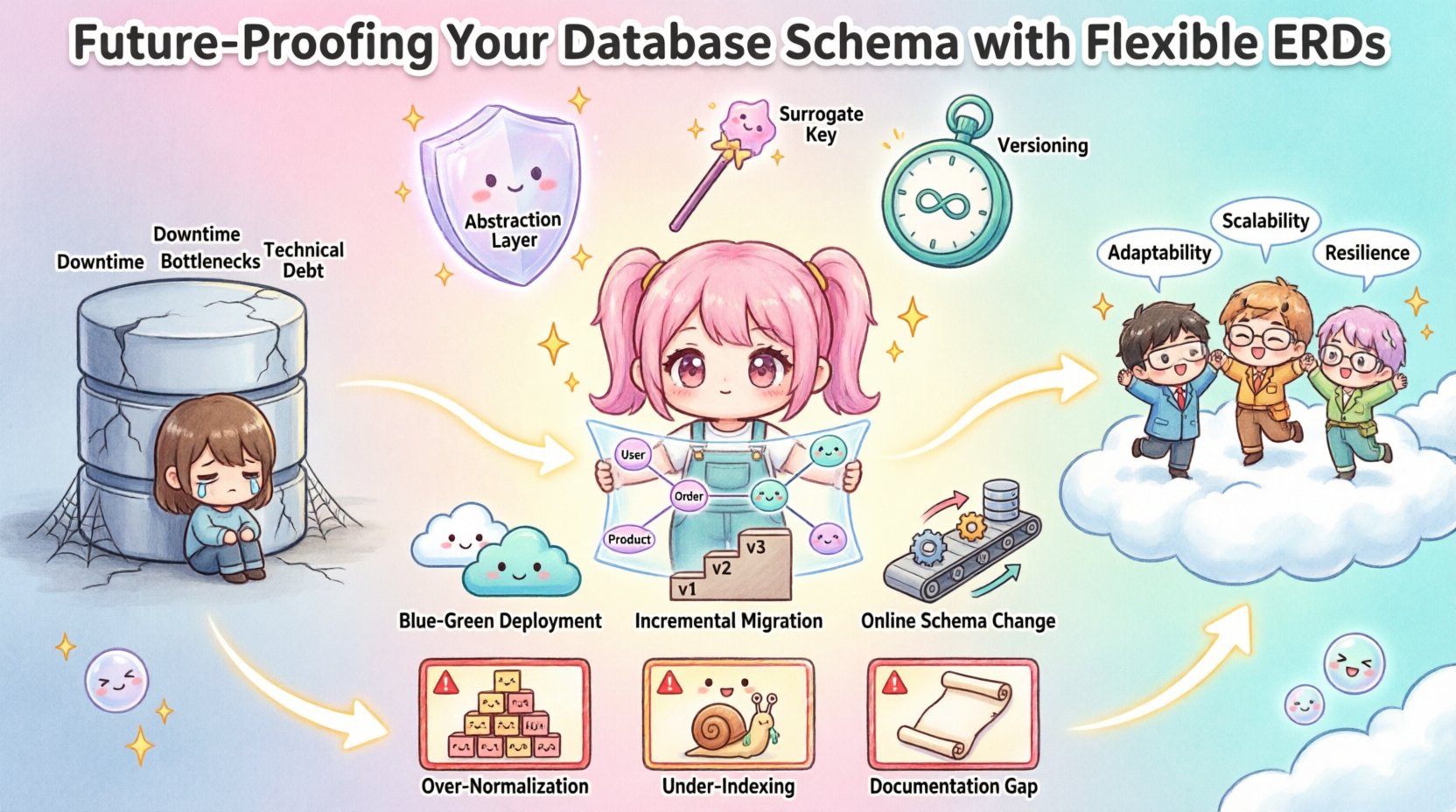

In the landscape of modern data architecture, the rigidity of traditional data models often clashes with the velocity of business requirements. As organizations scale, their data structures must evolve alongside them without causing catastrophic downtime or immense technical debt. This is where the concept of future-proofing your database schema comes into play. By leveraging flexible Entity Relationship Diagrams (ERDs), architects can design systems that adapt to change rather than resisting it. This approach prioritizes longevity, maintainability, and scalability over immediate optimization.

Designing a database is not merely about defining tables and columns; it is about anticipating the trajectory of information flow. A well-crafted ERD serves as the blueprint for this trajectory. When flexibility is embedded into the design phase, subsequent migrations become routine adjustments rather than disruptive overhauls. This article explores the methodologies required to build resilient data models that withstand the test of time.

Understanding Flexible Entity Relationship Diagrams 📐

A standard ERD maps out the relationships between entities, attributes, and keys. However, a flexible ERD goes beyond static mapping. It incorporates patterns that allow for schema evolution. This involves designing relationships that can accommodate new data types without requiring structural refactoring.

- Decoupling Metadata: Separating structural definitions from data values allows for dynamic attribute handling.

- Generic Relationship Tables: Utilizing polymorphic associations where specific business rules might change over time.

- Extensible Attribute Sets: Designing columns or tables that can store varying data structures without breaking normalization rules.

When you view the ERD as a living document rather than a final contract, the design philosophy shifts. The goal is to minimize the friction between the physical storage layer and the logical application layer. This separation ensures that changes in one do not inevitably break the other.

The Cost of Schema Rigidity ⚠️

Many organizations operate under the assumption that requirements will remain stable. History shows this is rarely the case. When a schema is rigid, any modification requires a migration process that locks tables, halts services, or risks data integrity. The consequences of ignoring flexibility include:

- Extended Downtime: Altering core structures in a high-availability environment is complex and risky.

- Application Bottlenecks: Developers spend more time fixing database errors than building features.

- Technical Debt: Temporary workarounds become permanent fixtures, degrading performance over time.

- Integration Friction: New systems struggle to connect with legacy data structures that are incompatible.

By acknowledging these risks early, teams can invest in a schema design that absorbs change. The initial effort to design flexibility pays dividends during the maintenance phase.

Core Principles of Flexible Design 🛠️

To achieve a robust schema, several foundational principles must guide the design process. These principles ensure that the database can grow without becoming unmanageable.

1. Abstraction Layers

Introduce abstraction between the application logic and the physical storage. This allows the underlying schema to change while the application interface remains consistent. Using views or intermediate tables can shield the application from direct table modifications.

2. Surrogate Keys

Replace natural keys with surrogate keys (artificial identifiers). Natural keys often change based on business logic or external factors. Surrogate keys provide a stable anchor for relationships, ensuring that foreign key constraints remain valid even when the underlying data changes.

3. Versioning

Implement versioning strategies for your data models. Just as code is versioned, data structures should track changes. This allows for rollback capabilities and ensures that older data can be interpreted correctly by newer versions of the application.

Strategies for Schema Evolution 🔄

Evolution is inevitable. The following strategies provide a framework for managing changes without disrupting operations. Each strategy addresses different scenarios regarding data volume and availability requirements.

Expandable Column Structures

Instead of creating a new column for every new attribute, consider using a flexible storage mechanism. While this requires careful indexing strategies, it allows for storing varying data types within a single field. This approach is particularly useful for user-generated content or feature flags that differ per user.

Shadow Tables

When a major structural change is needed, create a shadow table with the new structure. Begin writing data to both the old and new tables simultaneously. Once the data is validated and the application logic is updated to read from the new table, the old table can be archived. This minimizes risk significantly.

Backward Compatibility

Always design changes to be backward compatible. If a column is deprecated, do not remove it immediately. Mark it as deprecated and allow existing queries to function until the migration is complete. This prevents application errors during the transition window.

Migration Pathways and Execution 🚀

Moving data from one schema state to another is a critical operation. A flexible design simplifies this process. The table below outlines common migration strategies and their trade-offs.

| Strategy | Best Use Case | Risk Level |

|---|---|---|

| Online Schema Change | Large tables, minimal downtime | Medium |

| Blue-Green Deployment | Complete environment swap | Low |

| Incremental Migration | Gradual data transfer | Low |

| Immediate Alter | Small tables, low traffic | High |

Choosing the right pathway depends on the volume of data and the tolerance for latency. A flexible ERD reduces the complexity of the migration itself by ensuring that the structural changes are additive rather than destructive.

Common Pitfalls to Avoid 🚫

Even with a flexible mindset, certain errors can undermine the design. Being aware of these pitfalls helps maintain integrity.

- Over-Normalization: Splitting data too finely can lead to performance issues when joining tables. Flexibility does not mean abandoning normalization entirely.

- Under-Indexing: Flexible columns often contain sparse data. Failing to index these columns properly can slow down queries significantly.

- Ignoring Data Types: Storing everything as strings might seem flexible but makes validation and sorting difficult. Use appropriate types even within flexible structures.

- Lack of Documentation: A flexible schema is harder to understand. Comprehensive documentation is essential to prevent knowledge loss.

Monitoring and Maintenance 📊

Once the schema is deployed, the work continues. Monitoring tools should track schema drift, which occurs when the actual database structure deviates from the documented ERD. Automated alerts can notify teams of unintended changes.

Regular audits are also necessary to clean up deprecated fields. As business needs shift, unused columns accumulate. Pruning these elements keeps the schema lean and performant. This process should be part of the regular development lifecycle, not a one-time event.

Conclusion on Long-Term Viability 🔗

Building a database that lasts requires foresight. By integrating flexibility into the Entity Relationship Diagram from the start, teams can navigate the complexities of data growth with confidence. The strategies outlined above provide a roadmap for creating systems that do not just survive change, but thrive within it.

The investment in a robust design pays off in reduced maintenance costs and faster feature delivery. As the data landscape continues to shift, the ability to adapt quickly will define the success of any technical infrastructure. Focus on the patterns, not just the tools, to ensure your data foundation remains solid.