Every robust data system begins with a solid foundation. When designing a relational database, the Entity Relationship Diagram (ERD) serves as the blueprint for how information connects, flows, and persists. However, a diagram that looks clean on paper often hides performance traps in the execution environment. Identifying these hidden bottlenecks is critical for maintaining system health, ensuring query speed, and preventing data integrity issues as your application scales.

Many teams focus on building features without auditing the underlying schema structure. This oversight leads to slow response times, difficult maintenance cycles, and unpredictable behavior under load. By conducting a thorough review of your current ERD, you can pinpoint structural weaknesses before they impact users. This guide outlines the specific areas where inefficiencies typically hide and provides a methodical approach to optimizing your database architecture.

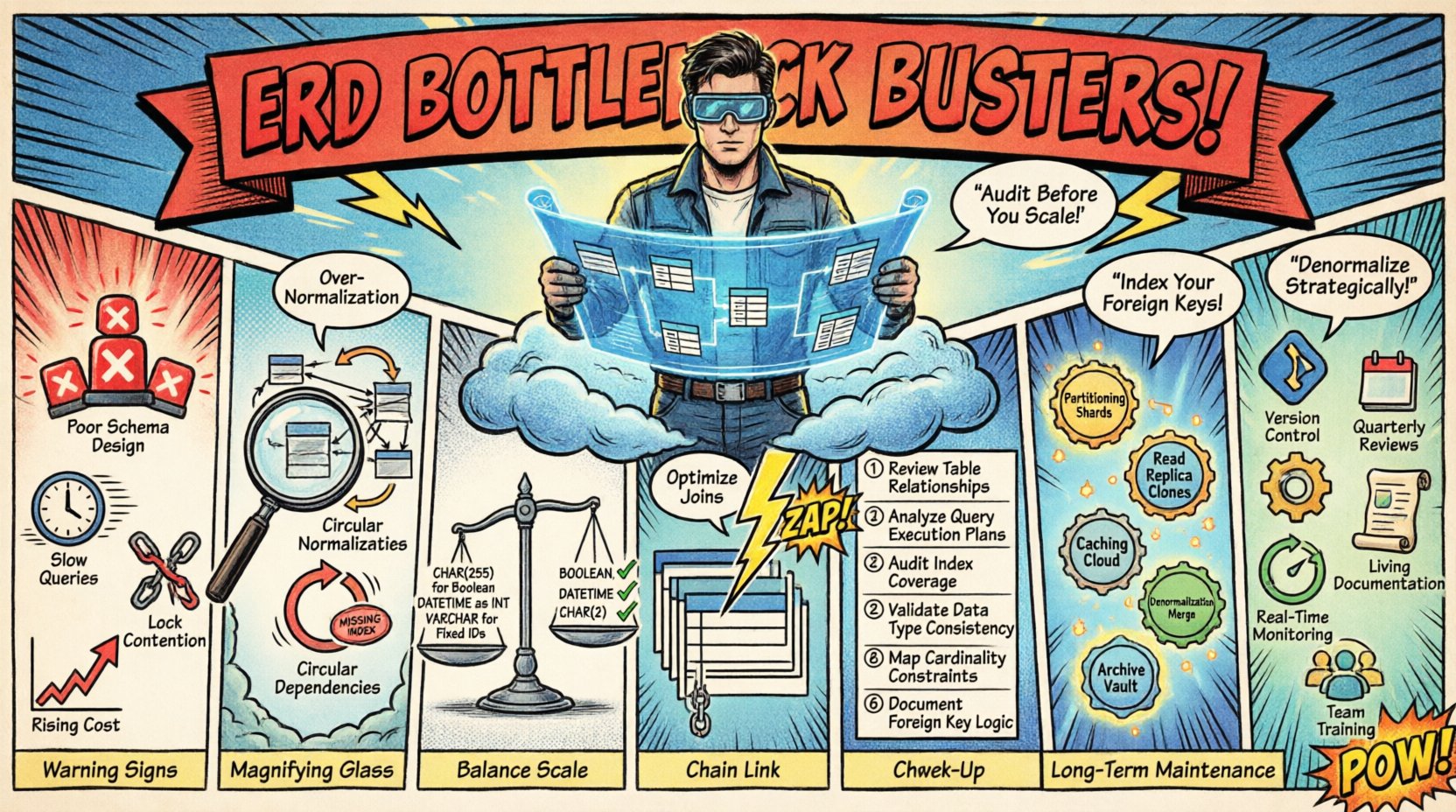

The Cost of Poor Schema Design 📉

When an ERD is not optimized for performance, the consequences ripple through the entire stack. Application servers spend excessive time waiting for database locks, network latency increases due to large data transfers, and storage costs rise unnecessarily. It is not merely about writing a few efficient queries; it is about ensuring the structure itself supports the workload.

- Query Latency: Complex joins across poorly indexed tables increase execution time significantly.

- Write Performance: Excessive foreign key constraints can slow down insert and update operations.

- Data Integrity: Ambiguous relationships lead to orphaned records and inconsistent data states.

- Scalability Limits: A rigid schema structure may prevent horizontal scaling or partitioning strategies.

Understanding these costs helps prioritize which parts of the diagram require immediate attention. The goal is not perfection on the first attempt, but rather a structured approach to continuous improvement.

Structural Inefficiencies to Watch For 🔍

There are specific patterns within an ERD that frequently signal underlying performance issues. These structural anomalies often stem from a lack of foresight during the initial design phase. Reviewing your diagram for the following signs can reveal where optimization is needed.

1. Over-Normalization

While normalization reduces redundancy, taking it too far creates a web of tables that are difficult to query efficiently. When a single logical entity is split across too many tables, every read operation requires multiple joins.

- Identify tables that contain only a single column or few rows.

- Check if these tables are joined in every query accessing the parent entity.

- Consider denormalizing specific columns to reduce join complexity for high-frequency reads.

2. Circular Dependencies

Tables that reference each other in a circular manner can cause deadlocks or infinite recursion during traversal. This structure makes it difficult to import or migrate data reliably.

- Map out the dependency chain for every table.

- Ensure there are clear entry and exit points for data flow.

- Resolve bidirectional relationships where one-way references suffice.

3. Missing or Redundant Indexes

An ERD often defines logical relationships, but it does not explicitly state where indexes exist. However, you can infer where indexes are needed based on foreign keys and frequent join columns.

- Look for foreign keys that lack corresponding indexes on the child table.

- Identify columns used in WHERE clauses that are not indexed.

- Check for redundant indexes that consume space but offer no unique access paths.

Data Type and Cardinality Misalignments ⚖️

The way data is defined within your tables directly impacts storage efficiency and query speed. Choosing the wrong data type or misinterpreting cardinality can lead to wasted resources and slow comparisons.

Cardinality Errors

Cardinality defines the relationship between entities (one-to-one, one-to-many, many-to-many). Mislabeling these relationships forces the database engine to enforce constraints that do not reflect business logic.

- One-to-Many: Ensure the foreign key exists on the “many” side.

- Many-to-Many: Verify that the junction table exists and contains unique composite keys.

- Optional vs. Required: Ensure NULL constraints match the actual business rules to avoid unnecessary checks.

Data Type Efficiency

Using a generic type like VARCHAR for everything might seem flexible, but it consumes more space and slows down comparisons. Fixed-length types and numeric types are generally faster.

| Attribute Type | Recommended Data Type | Reason |

|---|---|---|

| Boolean Flag | BOOLEAN or TINYINT | Saves space compared to string or larger integers |

| Date/Time | DATETIME or TIMESTAMP | Optimized for range queries and sorting |

| Short Codes | CHAR (Fixed Length) | Faster comparison than variable-length strings |

| Large Text | TEXT or CLOB | Prevents blocking of shorter records |

| Unique Identifiers | BIGINT or UUID | Ensures uniqueness and proper indexing |

Relationship Complexity and Join Performance 🔗

As data grows, the number of joins required to retrieve a single record often increases. Complex relationship graphs can lead to query execution plans that scan large portions of the disk. Analyzing the connectivity of your diagram helps identify high-cost paths.

- Deep Nesting: If you must join five or more tables to get basic information, consider restructuring.

- Join Order: The database engine determines the order, but the schema structure limits its choices.

- Self-Joins: Tables that join to themselves (e.g., for hierarchy) require careful indexing on the parent key.

- Large Joins: Avoid joining massive tables without filtering conditions first.

When joins become too frequent, it often indicates that the data model is too normalized for the current access patterns. In such cases, creating materialized views or adding redundant columns can reduce the need for runtime joins.

A Step-by-Step Schema Audit Process 📋

Optimizing an ERD requires a systematic approach. You cannot fix everything at once. Follow this workflow to identify and address bottlenecks effectively.

- Inventory the Schema: List all tables, columns, and relationships. Document the intended purpose of each entity.

- Analyze Query Patterns: Review the most frequently executed queries. Identify which tables and columns are accessed most often.

- Check Cardinality: Verify that every foreign key accurately reflects the relationship logic.

- Review Indexing: Ensure primary keys are indexed and foreign keys have supporting indexes.

- Test Constraints: Verify that checks and triggers do not introduce unnecessary overhead.

- Refactor: Apply changes iteratively, testing performance after each modification.

Remediation Techniques for High Traffic ⚡

Once bottlenecks are identified, specific techniques can be applied to improve throughput. These strategies depend on the nature of the data and the usage patterns.

- Partitioning: Split large tables into smaller, manageable chunks based on date or region to improve query scope.

- Read Replicas: Direct read-heavy traffic to secondary databases to reduce load on the primary.

- Caching: Store frequently accessed data in memory to bypass database lookups for static information.

- Denormalization: Duplicate data intentionally to reduce the need for joins in high-frequency reports.

- Archiving: Move historical data to cold storage to keep the active schema lean.

Long-Term Maintenance Strategies 🔄

Schema optimization is not a one-time task. Data needs change, and usage patterns evolve. Establishing a culture of maintenance ensures your ERD remains efficient over time.

- Version Control: Treat schema changes as code. Store migration scripts in your repository.

- Regular Reviews: Schedule quarterly audits to check for new bottlenecks.

- Documentation: Keep the ERD documentation up to date with every deployment.

- Monitoring: Set up alerts for slow queries or high lock contention.

- Team Training: Ensure developers understand the implications of their design choices on the overall system.

By maintaining vigilance over your Entity Relationship Diagram, you ensure that the database continues to serve as a reliable asset rather than a liability. Focus on the structure, validate the relationships, and keep the data types appropriate for the workload. This disciplined approach leads to a stable, scalable, and performant system without relying on shortcuts or hype.

Remember that the best design is the one that adapts to change without breaking. Regularly revisit your models, test them against real data, and adjust based on actual performance metrics rather than theoretical assumptions.