Database architecture is rarely static. As applications grow and requirements shift, the underlying data structures must adapt. This process is known as schema evolution. However, introducing changes to a production database carries significant risks. A single incorrect constraint or dropped column can halt application functionality or corrupt critical data. To mitigate these risks, engineers rely on a robust validation strategy grounded in Entity Relationship Models (ERMs). 🛡️

Validating schema evolution before deployment ensures that logical changes align with physical constraints. It bridges the gap between design intent and runtime reality. By using ER models as the source of truth, teams can simulate changes, check dependencies, and verify compatibility without touching live data. This approach reduces downtime and avoids the errors that ad hoc, hand-written migration scripts tend to introduce.

Why Schema Evolution Matters 📉

In modern development cycles, data is the backbone of every feature. When a business requirement changes, the database often needs to reflect that shift. This could mean adding a new field, splitting a table, or altering a data type. Without a structured validation process, these changes become a gamble.

Common challenges during evolution include:

- Breaking Changes: Removing a column that applications depend on immediately causes errors.

- Performance Degradation: New indexes add overhead to every write, and changing a data type or storage engine can alter query plans in ways that slow reads unexpectedly.

- Data Integrity Loss: Poorly defined constraints can allow invalid data to enter the system.

- Downtime: Locking tables during migration can make the application unavailable to users.

Using an ER model allows architects to visualize these risks before they happen. The model serves as a blueprint, showing relationships, cardinality, and constraints clearly. 📐

The Role of ER Models in Validation 🧩

An Entity Relationship Model represents the logical structure of a database. It defines entities (tables), attributes (columns), and relationships (foreign keys). When validating evolution, the ER model acts as the baseline for comparison.

Here is how the model aids in validation:

- Dependency Mapping: It shows which tables rely on others. If a parent table changes, the child table must be checked.

- Constraint Verification: Primary keys and unique constraints are visible at a glance, ensuring they are not violated during updates.

- Normalization Checks: It helps verify that new structures still adhere to normalization rules, preventing redundancy.

- Historical Context: Comparing the current ER diagram with the proposed one highlights exactly what has changed.

By treating the ER diagram as a version-controlled artifact, teams can track evolution over time. This creates an audit trail for why specific schema decisions were made.
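Dependency mapping can be sketched in a few lines once the model is available as data. The example below assumes a hypothetical dictionary representation of an ER model (`ER_MODEL`, with `customers`, `orders`, and `order_items` as made-up entities); real modeling tools export their own formats, so treat this as an illustration of the idea rather than a reference implementation.

```python
from collections import defaultdict

# Hypothetical ER model: entity -> columns and foreign keys.
# Each foreign key maps a local column to (parent entity, parent column).
ER_MODEL = {
    "customers": {"columns": ["id", "email"], "foreign_keys": {}},
    "orders": {
        "columns": ["id", "customer_id", "total"],
        "foreign_keys": {"customer_id": ("customers", "id")},
    },
    "order_items": {
        "columns": ["id", "order_id", "sku"],
        "foreign_keys": {"order_id": ("orders", "id")},
    },
}


def direct_children(model):
    """Map each parent entity to the entities that reference it."""
    children = defaultdict(set)
    for entity, spec in model.items():
        for parent, _column in spec["foreign_keys"].values():
            children[parent].add(entity)
    return children


def affected_entities(model, changed):
    """Return every entity that depends, directly or transitively, on `changed`."""
    children = direct_children(model)
    seen, stack = set(), [changed]
    while stack:
        for child in children[stack.pop()]:
            if child not in seen:
                seen.add(child)
                stack.append(child)
    return seen


print(sorted(affected_entities(ER_MODEL, "customers")))
# ['order_items', 'orders']
```

A change to `customers` therefore puts both `orders` and `order_items` on the review list, even though only `orders` references it directly.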

Identifying Change Types 🔍

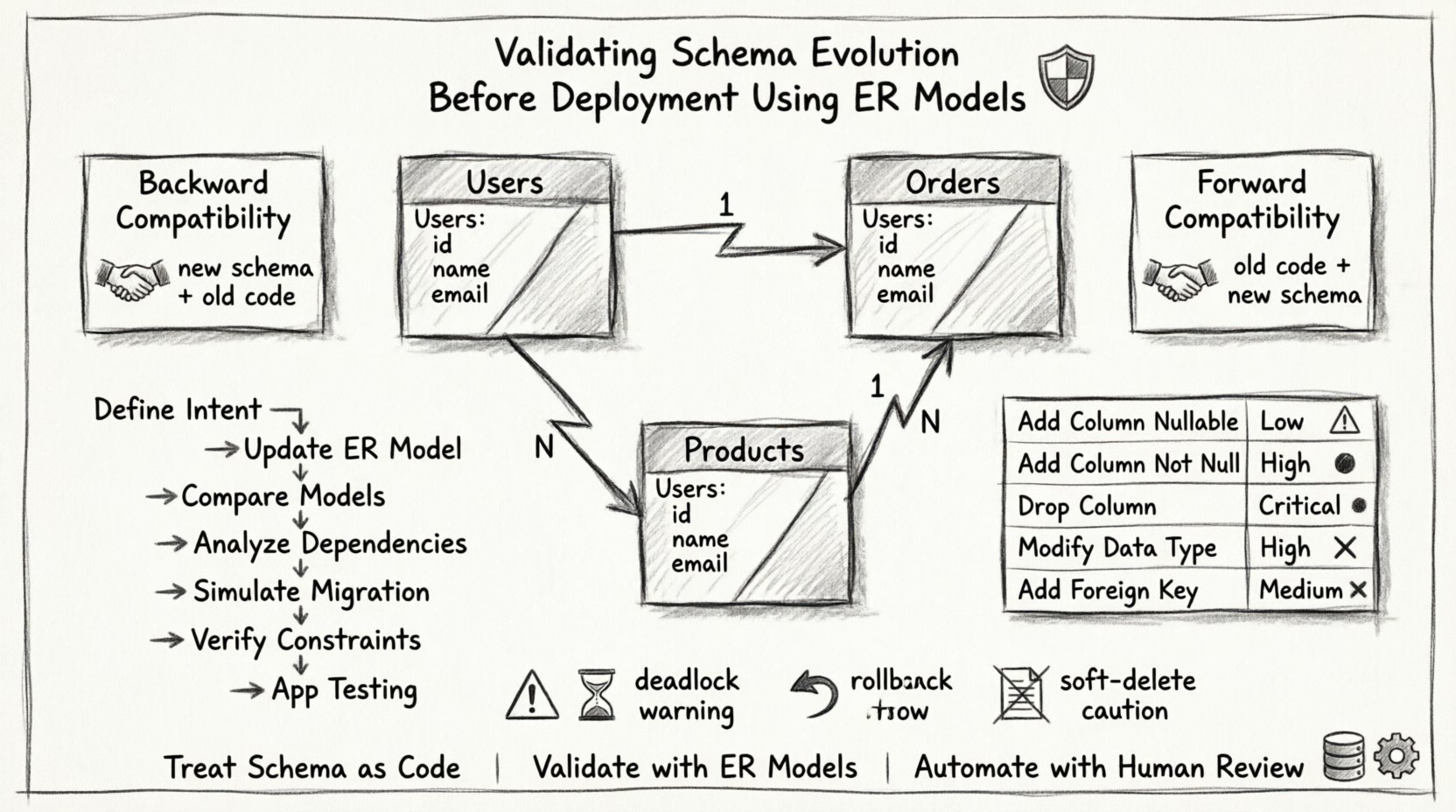

Not all schema changes are created equal. Some are safe, while others require complex migration strategies. Categorizing changes helps determine the validation depth required.

| Change Type | Risk Level | Validation Focus |
|---|---|---|
| Add Column (Nullable) | Low | Check default values and storage size. |
| Add Column (Not Null) | High | Ensure existing data satisfies the constraint or provide a default. |
| Drop Column | Critical | Verify no application code references the column. |
| Modify Data Type | High | Check for data truncation or precision loss. |
| Add Foreign Key | Medium | Ensure referential integrity is maintained across existing rows. |

Understanding these categories allows engineers to prioritize their testing efforts. Critical changes require manual review, whereas low-risk changes might be automated.
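One way to put this categorization to work is to encode it as a lookup and sort a planned change set by risk. The sketch below takes its risk levels from the table above; the change-kind labels (`add_nullable_column` and so on) and the `review_plan` helper are hypothetical names chosen for illustration.

```python
# Risk levels follow the table above; the change-kind names are made-up labels.
RISK_BY_CHANGE = {
    "add_nullable_column": "low",
    "add_not_null_column": "high",
    "drop_column": "critical",
    "modify_data_type": "high",
    "add_foreign_key": "medium",
}

RISK_ORDER = ["low", "medium", "high", "critical"]


def review_plan(changes):
    """Order changes from riskiest to safest and flag critical ones for manual review."""
    ranked = sorted(
        changes,
        key=lambda kind: RISK_ORDER.index(RISK_BY_CHANGE[kind]),
        reverse=True,
    )
    return [
        (kind, RISK_BY_CHANGE[kind], RISK_BY_CHANGE[kind] == "critical")
        for kind in ranked
    ]


for kind, risk, manual in review_plan(
    ["add_nullable_column", "drop_column", "add_foreign_key"]
):
    print(f"{kind:22} {risk:9} manual review: {manual}")
```

An unknown change kind raises `KeyError` here, which is arguably the right default: a change nobody has classified should not pass silently.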

Compatibility Strategies 🔄

When deploying schema changes, maintaining compatibility with the application is crucial. There are two main strategies to consider: backward compatibility and forward compatibility.

Backward Compatibility

This ensures the new schema works with the old application code. It is essential when deploying database changes before application updates. For example, if you add a nullable column, old code that names its columns explicitly simply ignores the new one and keeps running. If you drop a column, old code that references it will fail unless the application is updated first or at the same time.

Forward Compatibility

This ensures the new application code can still run against the old schema. It is useful when the application is deployed before the database change, or when a schema change has to be rolled back while the new code stays live. For instance, new code that treats a not-yet-added column as optional keeps working until the migration lands.

A robust validation process checks both directions. The ER model helps visualize if a change breaks the contract between the application and the database. 🤝
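The contract can be exercised directly. The sketch below uses Python's built-in `sqlite3` module with a hypothetical `users` table to show which kind of old code survives an added column and which does not. SQLite stands in for the production engine here, so error types and messages will differ elsewhere.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")

# Schema change deployed ahead of the application: a new nullable column.
conn.execute("ALTER TABLE users ADD COLUMN email TEXT")

# Old code that names its columns keeps working against the new schema.
conn.execute("INSERT INTO users (id, name) VALUES (?, ?)", (1, "Ada"))
row = conn.execute("SELECT id, name FROM users").fetchone()

# Old code that relies on the number of columns does not.
try:
    conn.execute("INSERT INTO users VALUES (?, ?)", (2, "Grace"))
    positional_insert_ok = True
except sqlite3.OperationalError:
    # The table now has 3 columns but only 2 values were supplied.
    positional_insert_ok = False

print(row, positional_insert_ok)
# (1, 'Ada') False
```

The same reasoning applies to `SELECT *` when the application unpacks rows by position: whether an additive change is compatible depends on how the old code was written, not only on the change itself.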

The Validation Process Step-by-Step 🚀

Executing a schema change requires a disciplined workflow. Relying on memory or quick scripts is dangerous. Follow this structured approach to validate evolution safely.

1. Define the Intent: Clearly document what needs to change and why. This prevents scope creep.

2. Update the ER Model: Create the proposed state of the diagram. Do not apply changes to the physical database yet.

3. Compare Models: Generate a diff between the current and proposed ER diagrams. Identify added, removed, or modified entities.

4. Analyze Dependencies: Trace foreign keys and indexes. Ensure no orphaned relationships will result from the change.

5. Simulate Migration: Run the migration script in a staging environment that mirrors production data volume.

6. Verify Constraints: Ensure triggers, checks, and constraints are applied correctly.

7. Application Testing: Run the application against the new schema to ensure queries return expected results.

Automation tools can assist in steps 3, 5, and 6, but human review remains vital for complex logic.
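The model comparison step is the easiest to automate. Below is a minimal sketch, assuming each ER model has been exported as a plain `{entity: {column: type}}` dictionary; that format, the `diff_models` helper, and the sample entities are all assumptions made for illustration.

```python
def diff_models(current, proposed):
    """Compare two ER models given as {entity: {column: type}} dictionaries."""
    report = {
        "added_entities": sorted(proposed.keys() - current.keys()),
        "removed_entities": sorted(current.keys() - proposed.keys()),
        "added_columns": [],
        "removed_columns": [],
        "retyped_columns": [],
    }
    # Only entities present in both models can have column-level changes.
    for entity in sorted(current.keys() & proposed.keys()):
        old, new = current[entity], proposed[entity]
        report["added_columns"] += [
            (entity, col) for col in sorted(new.keys() - old.keys())
        ]
        report["removed_columns"] += [
            (entity, col) for col in sorted(old.keys() - new.keys())
        ]
        report["retyped_columns"] += [
            (entity, col, old[col], new[col])
            for col in sorted(old.keys() & new.keys())
            if old[col] != new[col]
        ]
    return report


current = {"users": {"id": "INTEGER", "age": "INTEGER", "nickname": "TEXT"}}
proposed = {
    "users": {"id": "INTEGER", "age": "TEXT", "email": "TEXT"},
    "teams": {"id": "INTEGER"},
}

report = diff_models(current, proposed)
for section, entries in report.items():
    print(section, entries)
```

Each section of the report maps onto a row of the risk table: `removed_columns` entries are the critical ones, `retyped_columns` the high-risk ones, and so on.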

Data Integrity and Constraints 🛑

The most critical aspect of schema evolution is data integrity. A change that looks correct on paper might fail when applied to millions of rows. ER models help visualize constraints, but validation requires testing them against real data.

Key areas to scrutinize include:

- Primary Keys: Ensure uniqueness is not compromised.

- Foreign Keys: Check for orphaned rows in existing data, and for circular dependencies that complicate the order of inserts, deletes, and migration steps.

- Check Constraints: Validate that business rules (e.g., age must be positive) hold true for existing data.

- Indexes: Confirm that new indexes do not conflict with existing ones or cause excessive write latency.

For example, changing a column from INT to VARCHAR might seem safe, but comparisons and sorting become lexicographic ('10' sorts before '9'), and application code that expects numeric operations will fail or return wrong results. The ER model records the logical type; the physical column type must stay consistent with it.
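Testing a constraint against real data does not require adding the constraint first. A common approach is to count the rows that would violate it. The sketch below uses an in-memory SQLite database with hypothetical `customers` and `orders` tables; in practice the same queries would run against a staging copy of production data.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customers (id INTEGER PRIMARY KEY, age INTEGER);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER);
    INSERT INTO customers VALUES (1, 34), (2, -5), (3, NULL);
    INSERT INTO orders VALUES (10, 1), (11, 2), (12, 99);
    """
)

# Each query counts existing rows that would violate a constraint we plan to add.
PRE_CHECKS = {
    "age NOT NULL": "SELECT COUNT(*) FROM customers WHERE age IS NULL",
    "CHECK (age > 0)": "SELECT COUNT(*) FROM customers WHERE age <= 0",
    "orders.customer_id FK": """
        SELECT COUNT(*) FROM orders o
        LEFT JOIN customers c ON c.id = o.customer_id
        WHERE o.customer_id IS NOT NULL AND c.id IS NULL
    """,
}

violations = {
    name: conn.execute(sql).fetchone()[0] for name, sql in PRE_CHECKS.items()
}
print(violations)
# {'age NOT NULL': 1, 'CHECK (age > 0)': 1, 'orders.customer_id FK': 1}
```

Any non-zero count means the migration needs a data fix or a default before the constraint can be applied.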

Common Pitfalls to Avoid ⚠️

Even experienced teams make mistakes. Being aware of common pitfalls helps in creating a more resilient validation process.

- Ignoring Lock Contention: Long-running migrations can hold table locks, blocking queries and causing application timeouts. Measure lock durations in staging before deploying.

- Assuming Zero Downtime: Some changes inherently require downtime. Plan for it explicitly rather than hoping for the best.

- Skipping Rollback Plans: If validation passes but production fails, a rollback script is mandatory. Test the rollback as rigorously as the migration.

- Overlooking Soft Deletes: Changing logic for soft-deleted records can lead to data loss if not handled carefully.
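Rollback testing can follow a simple pattern: snapshot the schema, apply the migration, undo it, and compare. The sketch below does this in SQLite, where DDL can run inside a transaction; the `accounts` table and the `schema_snapshot` helper are hypothetical. Some engines commit DDL implicitly, so there the undo step would be a separate down-migration script rather than a `ROLLBACK`.

```python
import sqlite3


def schema_snapshot(conn):
    """Return the name and CREATE statement SQLite stores for each schema object."""
    return conn.execute(
        "SELECT name, sql FROM sqlite_master ORDER BY name"
    ).fetchall()


# isolation_level=None disables implicit transactions, so the explicit
# BEGIN and ROLLBACK below are the only transaction boundaries.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance INTEGER)")
conn.execute("INSERT INTO accounts VALUES (1, 100)")

before = schema_snapshot(conn)

conn.execute("BEGIN")
conn.execute("ALTER TABLE accounts ADD COLUMN currency TEXT DEFAULT 'USD'")
during = schema_snapshot(conn)
conn.execute("ROLLBACK")

after = schema_snapshot(conn)
rows_after = conn.execute("SELECT * FROM accounts").fetchall()

print("migration changed schema:", before != during)
print("rollback restored schema:", before == after)
print("data intact:", rows_after == [(1, 100)])
```

All three checks should print `True`. A rollback that restores the schema but not the data would fail the last one.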

Automating the Workflow ⚙️

While manual validation is thorough, it does not scale. Automation tools can parse ER models and generate migration scripts. They can also run linting checks to catch common errors before deployment.

Benefits of automation include:

- Consistency: Every change follows the same rules.

- Speed: Scripts run faster than manual reviews.

- Documentation: Generated reports serve as proof of validation for compliance audits.

- Integration: Automated checks can be part of the CI/CD pipeline, blocking deployments if validation fails.

However, automation should not replace human judgment. Complex business logic often requires a review by a senior engineer who understands the context of the data.
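A linting check of the kind described above can be sketched as a set of pattern rules over the migration script. The rule names, patterns, and sample migration below are illustrative assumptions, and splitting on `;` is deliberately naive (it would mis-handle semicolons inside string literals). A production linter would parse the SQL properly.

```python
import re

# Hypothetical lint rules: (rule name, pattern that marks a risky statement).
RULES = [
    ("drop-column", re.compile(r"\bDROP\s+COLUMN\b", re.IGNORECASE)),
    ("drop-table", re.compile(r"\bDROP\s+TABLE\b", re.IGNORECASE)),
    (
        "not-null-without-default",
        re.compile(
            r"\bADD\s+COLUMN\b(?!.*\bDEFAULT\b).*\bNOT\s+NULL\b",
            re.IGNORECASE | re.DOTALL,
        ),
    ),
]


def lint_migration(sql):
    """Return (statement number, rule name) for every risky statement found."""
    findings = []
    statements = [part.strip() for part in sql.split(";") if part.strip()]
    for number, statement in enumerate(statements, start=1):
        for name, pattern in RULES:
            if pattern.search(statement):
                findings.append((number, name))
    return findings


def exit_code(findings):
    """A non-zero exit code fails the CI job, which blocks the deployment."""
    return 1 if findings else 0


MIGRATION = """
ALTER TABLE users ADD COLUMN email TEXT;
ALTER TABLE users ADD COLUMN tier TEXT NOT NULL;
ALTER TABLE users ADD COLUMN region TEXT NOT NULL DEFAULT 'eu';
ALTER TABLE users DROP COLUMN nickname;
"""

findings = lint_migration(MIGRATION)
print(findings, exit_code(findings))
# [(2, 'not-null-without-default'), (4, 'drop-column')] 1
```

In a pipeline, the script would end with `sys.exit(exit_code(findings))`. The flagged statements are exactly the ones that should be routed to a human reviewer.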

Final Thoughts on Schema Management 🌱

Schema evolution is a continuous process that requires vigilance. Treating the database schema as code is the first step toward reliability. By using ER models to validate changes, teams can maintain high availability and data accuracy.

The goal is not just to make changes, but to make them safely. A well-validated schema ensures that the application remains stable even as requirements evolve. This discipline builds trust between the development team and the infrastructure. 🏗️

Invest time in the design phase. Create clear diagrams. Document every constraint. Test every migration. These practices form the foundation of a healthy data ecosystem. When the database is stable, the application can thrive.

Remember that schema validation is not a one-time event. It is a culture. As the system grows, the validation process must grow with it. Regular reviews of the ER model ensure that the architecture remains aligned with business goals. This proactive approach prevents technical debt from accumulating over time.