Data architecture forms the backbone of any robust digital system. When an application scales, the underlying structure must evolve to handle increased load, complexity, and volume. An Entity Relationship Diagram (ERD) is more than a static map; it is a strategic blueprint that dictates how information flows, relates, and persists within a database. Designing for growth requires foresight, so that the schema can accommodate future requirements without a complete rebuild.

A poorly constructed model leads to bottlenecks, slow query performance, and rigid constraints that hinder development velocity. Conversely, a well-designed ERD supports flexibility, integrity, and efficiency. This guide explores the essential principles of building data models that stand the test of time and expansion.

Foundations of Entity Modeling 🏗️

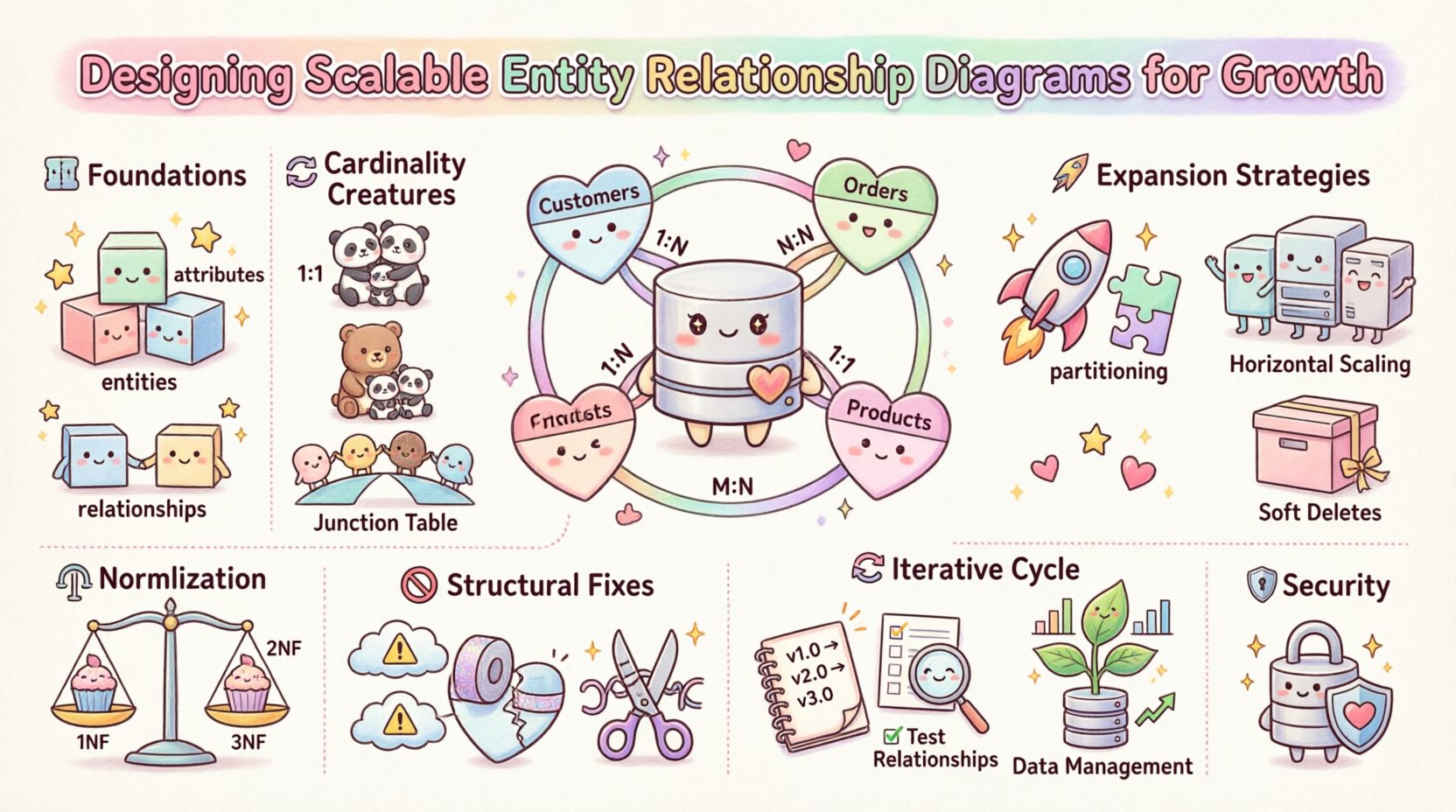

Before addressing scalability, one must understand the core components. An Entity Relationship Diagram visualizes the structure of data through three primary elements: entities, attributes, and relationships.

Entities: These represent objects or concepts within the system, such as a User, Product, or Order. In a physical database, entities translate to tables.

Attributes: These are the specific properties describing an entity, like a username, price, or creation_date. Attributes determine the granularity of data storage.

Relationships: These define how entities interact. A relationship establishes the logic connecting one entity to another, often through foreign keys.

Clarity in these definitions prevents ambiguity during development. Every field must have a distinct purpose, and every relationship must serve a logical business rule. Ambiguity in the design phase often results in costly refactoring later.
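The three elements above can be sketched in a few lines. This is a minimal illustration using Python's built-in `sqlite3` module, not a recommended production schema; the `users` and `orders` tables and their columns are assumptions chosen to mirror the User and Order examples in the text.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# SQLite only enforces foreign keys when this pragma is switched on.
conn.execute("PRAGMA foreign_keys = ON")

conn.executescript("""
CREATE TABLE users (                      -- entity: User
    id            INTEGER PRIMARY KEY,
    username      TEXT NOT NULL UNIQUE,   -- attribute
    creation_date TEXT NOT NULL           -- attribute (ISO 8601 text)
);
CREATE TABLE orders (                     -- entity: Order
    id          INTEGER PRIMARY KEY,
    user_id     INTEGER NOT NULL REFERENCES users(id),  -- relationship via foreign key
    total_cents INTEGER NOT NULL
);
""")

conn.execute("INSERT INTO users (id, username, creation_date) VALUES (1, 'ada', '2024-01-01')")
conn.execute("INSERT INTO orders (user_id, total_cents) VALUES (1, 2500)")

# An order pointing at a user that does not exist violates the relationship.
try:
    conn.execute("INSERT INTO orders (user_id, total_cents) VALUES (999, 100)")
    orphan_rejected = False
except sqlite3.IntegrityError:
    orphan_rejected = True

order_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
```

Here the foreign key turns the business rule "every order belongs to a user" into something the database itself refuses to break.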

Cardinality and Multiplicity 🔄

Understanding the cardinality of relationships is critical for scalability. Cardinality defines the number of instances of one entity that can or must be associated with each instance of another entity. Misinterpreting this leads to inefficient storage and complex queries.

One-to-One (1:1): A record in Table A relates to exactly one record in Table B. This is rare in high-traffic systems but useful for separating sensitive data or optional attributes to reduce table width.

One-to-Many (1:N): A single record in Table A relates to multiple records in Table B. This is the most common relationship, such as one Customer having many Orders.

Many-to-Many (M:N): Records in Table A relate to multiple records in Table B, and vice versa. This requires a junction table to resolve into two one-to-many relationships for implementation.

As data volume grows, Many-to-Many relationships can become performance bottlenecks, because every traversal passes through the junction table. Index the junction table on both foreign keys so that lookups from either side stay fast. Designers should also evaluate whether a Many-to-Many relationship can be simplified into a One-to-Many structure by introducing an intermediary concept.
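A junction table indexed in both directions might look like the following sketch. The `students`, `courses`, and `enrollments` names are hypothetical; the composite primary key covers lookups by student, and the extra index covers lookups by course.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE students (id INTEGER PRIMARY KEY, name  TEXT NOT NULL);
CREATE TABLE courses  (id INTEGER PRIMARY KEY, title TEXT NOT NULL);

-- Junction table: resolves M:N into two 1:N relationships.
CREATE TABLE enrollments (
    student_id INTEGER NOT NULL REFERENCES students(id),
    course_id  INTEGER NOT NULL REFERENCES courses(id),
    PRIMARY KEY (student_id, course_id)   -- serves "courses for a student"
);
-- Reverse index: serves "students in a course".
CREATE INDEX idx_enrollments_course ON enrollments (course_id, student_id);
""")

conn.executemany("INSERT INTO students VALUES (?, ?)", [(1, "Ada"), (2, "Linus")])
conn.executemany("INSERT INTO courses VALUES (?, ?)", [(10, "Databases"), (11, "Networks")])
conn.executemany("INSERT INTO enrollments VALUES (?, ?)", [(1, 10), (2, 10), (1, 11)])

students_in_databases = [
    row[0]
    for row in conn.execute(
        """SELECT s.name FROM enrollments e
           JOIN students s ON s.id = e.student_id
           WHERE e.course_id = ? ORDER BY s.name""",
        (10,),
    )
]

# Ask SQLite how it would resolve a lookup by course.
plan_rows = conn.execute(
    "EXPLAIN QUERY PLAN SELECT student_id FROM enrollments WHERE course_id = ?", (10,)
).fetchall()
plan_detail = " | ".join(row[-1] for row in plan_rows)
```

Without the second index, a lookup by `course_id` could not use the primary key efficiently, since `course_id` is not its leading column.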

Normalization Strategies for Performance ⚖️

Normalization is the process of organizing data to reduce redundancy and improve integrity. While often viewed as a static rule, the level of normalization chosen directly impacts scalability.

First Normal Form (1NF): Ensures atomic values. Each column contains only one value, eliminating repeating groups.

Second Normal Form (2NF): Builds on 1NF by removing partial dependencies. Non-key attributes must depend on the whole primary key.

Third Normal Form (3NF): Removes transitive dependencies. Non-key attributes must depend only on the primary key, not on other non-key attributes.

While strict normalization ensures data integrity, it can introduce performance overhead due to the number of joins required. For high-volume read operations, a degree of denormalization might be necessary. This involves duplicating data to reduce the need for complex joins, trading storage space for query speed.

The decision to normalize or denormalize should be driven by the read-to-write ratio of the application. Write-heavy systems benefit from higher normalization to maintain consistency. Read-heavy systems may benefit from denormalization to minimize join operations.
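The trade-off can be made concrete with a small sketch. The tables below are hypothetical: `customers` and `orders` follow 3NF, while `orders_flat` is a denormalized copy that repeats the customer's city on every order row.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Normalized (3NF): the city is stored once, on the customer.
CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT NOT NULL, city TEXT NOT NULL);
CREATE TABLE orders (
    id          INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customers(id),
    total_cents INTEGER NOT NULL
);
-- Denormalized read model: the city is duplicated onto each order.
CREATE TABLE orders_flat (
    id            INTEGER PRIMARY KEY,
    customer_id   INTEGER NOT NULL,
    customer_city TEXT NOT NULL,
    total_cents   INTEGER NOT NULL
);
""")

conn.execute("INSERT INTO customers VALUES (1, 'Ada', 'London')")
amounts = [(1, 1000), (2, 2000), (3, 3000)]
conn.executemany("INSERT INTO orders VALUES (?, 1, ?)", amounts)
conn.executemany("INSERT INTO orders_flat VALUES (?, 1, 'London', ?)", amounts)

# Read path: the normalized query needs a join, the flat one does not.
normalized_total = conn.execute(
    """SELECT SUM(o.total_cents) FROM orders o
       JOIN customers c ON c.id = o.customer_id
       WHERE c.city = 'London'"""
).fetchone()[0]
flat_total = conn.execute(
    "SELECT SUM(total_cents) FROM orders_flat WHERE customer_city = 'London'"
).fetchone()[0]

# Write path: a change of city touches one row when normalized,
# but every duplicated row when denormalized.
normalized_rows_updated = conn.execute(
    "UPDATE customers SET city = 'Paris' WHERE id = 1"
).rowcount
flat_rows_updated = conn.execute(
    "UPDATE orders_flat SET customer_city = 'Paris' WHERE customer_id = 1"
).rowcount
```

Both reads return the same total, but the update costs differ: one row in the normalized model against three in the flat one, and that gap widens as the order count grows.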

Planning for Expansion 🚀

Scalability is not an afterthought; it must be integrated into the initial design. Several architectural decisions made during the ERD phase influence how the system handles growth.

Partitioning: Large tables should be designed with partitioning in mind. Columns used for partitioning (e.g., region or date) should be indexed and accessible without requiring full table scans.

Horizontal Scaling: If data is distributed across multiple nodes, the schema must support sharding keys. Avoid using a globally unique identifier as the sole partition key unless its values distribute uniformly across nodes; a skewed key concentrates load on a few shards.

Soft Deletes: Instead of physically removing records, mark them as inactive. This preserves historical data and audit trails, and it avoids the cascading deletes that physical removal can trigger. Queries must then exclude inactive rows consistently.
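As one possible shape for the soft-delete pattern, the sketch below uses a nullable `deleted_at` column, a partial index over active rows, and a view that hides inactive rows. The `products` table, the `active_products` view, and the `soft_delete` helper are all assumptions made for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE products (
    id         INTEGER PRIMARY KEY,
    name       TEXT NOT NULL,
    deleted_at TEXT                -- NULL means the row is active
);
-- Partial index: only active rows are indexed, so it stays small.
CREATE INDEX idx_products_active_name ON products (name) WHERE deleted_at IS NULL;
-- View that applies the "active only" filter in one place.
CREATE VIEW active_products AS
    SELECT id, name FROM products WHERE deleted_at IS NULL;
""")

def soft_delete(conn, product_id):
    """Mark a product as inactive instead of removing the row."""
    conn.execute(
        "UPDATE products SET deleted_at = datetime('now') "
        "WHERE id = ? AND deleted_at IS NULL",
        (product_id,),
    )

conn.executemany("INSERT INTO products (id, name) VALUES (?, ?)", [(1, "Lamp"), (2, "Desk")])
soft_delete(conn, 1)

active_names = [row[0] for row in conn.execute("SELECT name FROM active_products")]
total_rows = conn.execute("SELECT COUNT(*) FROM products").fetchone()[0]
```

The row for the deleted product is still stored, so history and audits remain possible, while application queries that read from the view never see it.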

Additionally, consider the impact of metadata. As features expand, new attributes are added frequently. Avoid hard-coding logic in the database schema. Use flexible data types or JSON columns for attributes that may vary by entity type, provided they do not compromise query performance.
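One way to apply this is to keep stable, frequently queried attributes as ordinary columns and place type-specific ones in a single JSON text column. The sketch below parses the JSON in Python so that it does not depend on any database-specific JSON functions; the `products` table and both helper functions are hypothetical.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
CREATE TABLE products (
    id           INTEGER PRIMARY KEY,
    product_type TEXT NOT NULL,              -- fixed, frequently filtered column
    price_cents  INTEGER NOT NULL,           -- fixed, frequently filtered column
    attributes   TEXT NOT NULL DEFAULT '{}'  -- JSON for attributes that vary by type
)
""")

def add_product(conn, product_type, price_cents, **attributes):
    """Insert a product; extra keyword arguments go into the JSON column."""
    cursor = conn.execute(
        "INSERT INTO products (product_type, price_cents, attributes) VALUES (?, ?, ?)",
        (product_type, price_cents, json.dumps(attributes)),
    )
    return cursor.lastrowid

def get_attributes(conn, product_id):
    """Return the type-specific attributes of a product as a dict."""
    row = conn.execute(
        "SELECT attributes FROM products WHERE id = ?", (product_id,)
    ).fetchone()
    return json.loads(row[0])

book_id = add_product(conn, "book", 1500, pages=320, binding="paperback")
shirt_id = add_product(conn, "shirt", 2000, size="M", color="blue")

book_attributes = get_attributes(conn, book_id)
shirt_attributes = get_attributes(conn, shirt_id)
```

Adding a new product type then needs no schema migration. The cost is that attributes inside the JSON column are harder to index and filter, which is why columns used in frequent queries are kept outside it.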

Common Structural Flaws 🚫

Even experienced designers encounter pitfalls. Identifying common structural flaws early can save significant technical debt. The following table outlines frequent issues and their implications.

| Flaw | Impact on Growth | Mitigation Strategy |
|---|---|---|
| Tight Coupling | Changes in one entity break others unexpectedly. | Use loose coupling via junction tables or API layers. |
| Missing Indexes | Query latency grows with table size as lookups fall back to full scans. | Identify high-frequency query columns and index them. |
| Rigid Constraints | Business logic changes require schema migrations. | Move validation logic to the application layer where possible. |
| Over-Normalization | Too many joins slow down read operations. | Denormalize specific tables for read-heavy workloads. |
| Unclear Relationships | Developers make incorrect assumptions about data flow. | Document cardinality and business rules clearly. |
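The missing-index flaw in the table above is easy to observe by asking the database for its query plan before and after an index is added. This sketch uses SQLite's `EXPLAIN QUERY PLAN`; the `orders` table, the index name, and the `query_plan` helper are assumptions, and the exact wording of the plan text varies between SQLite versions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, customer_id INTEGER NOT NULL, total_cents INTEGER NOT NULL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total_cents) VALUES (?, ?)",
    [(i % 100, i * 10) for i in range(1000)],
)

def query_plan(conn, sql, params=()):
    """Return SQLite's plan description for a query as one string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[-1] for row in rows)  # last column holds the detail text

lookup = "SELECT id, total_cents FROM orders WHERE customer_id = ?"

# Without an index, the only option is to scan every row.
plan_before = query_plan(conn, lookup, (42,))

conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")

# With the index, the planner can search instead of scan.
plan_after = query_plan(conn, lookup, (42,))

print(plan_before)
print(plan_after)
```

Running the same check against the slowest production queries is a cheap way to decide which columns deserve an index.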

Iterative Refinement Process 🔄

Designing a scalable ERD is rarely a one-time event. It is an iterative process that evolves alongside the product. Documentation is a critical component of this cycle.

Version Control: Treat schema changes like code. Use migration scripts to track modifications over time. This allows for rollback capabilities and historical analysis.

Review Cycles: Conduct regular reviews with stakeholders. Ensure that the data model aligns with current business goals and anticipated future needs.

Testing: Simulate growth scenarios. Load test the database with data volumes that reflect future projections. Observe how the relationships perform under stress.
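Treating schema changes like code can start very small. The sketch below is a forward-only migration runner that records the applied version in SQLite's `user_version` pragma; the `MIGRATIONS` list and the `migrate` function are hypothetical, and rollback scripts, which a real migration tool would provide, are left out for brevity.

```python
import sqlite3

# Ordered, append-only list of schema changes; position + 1 is the version number.
MIGRATIONS = [
    "CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT NOT NULL)",
    "ALTER TABLE users ADD COLUMN email TEXT",
    "CREATE INDEX idx_users_email ON users (email)",
]

def migrate(conn):
    """Apply every migration newer than the stored schema version."""
    current = conn.execute("PRAGMA user_version").fetchone()[0]
    for version, statement in enumerate(MIGRATIONS[current:], start=current + 1):
        conn.execute(statement)
        # PRAGMA does not accept bound parameters; version is always an int here.
        conn.execute(f"PRAGMA user_version = {version}")
    conn.commit()
    return conn.execute("PRAGMA user_version").fetchone()[0]

conn = sqlite3.connect(":memory:")
first_run_version = migrate(conn)
second_run_version = migrate(conn)  # nothing left to apply, so this is a no-op

user_columns = [row[1] for row in conn.execute("PRAGMA table_info(users)")]
```

Because the list is kept in version control, the history of the schema is the history of this file, and any environment can be brought to the current version by running `migrate` once.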

Feedback loops are essential. If a specific query consistently underperforms, revisit the ERD. Sometimes, a slight adjustment to the relationship or an index strategy resolves the issue without major architectural changes.

Managing Data Growth 📈

As the system matures, the volume of data increases. The ERD must accommodate this without compromising accessibility. Archiving strategies should be considered during the design phase.

Historical Data: Identify data that is accessed less frequently. Design partitions or tables specifically for historical records to keep active tables lean.

Retention Policies: Define rules for data retention. The schema should include fields, such as creation or expiration timestamps, that let automated jobs identify records past their retention period.

Replication: Plan for read replicas. The schema should support read-only operations on secondary nodes without data integrity conflicts.
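A simple archiving job built on these ideas might look like the sketch below. It assumes a hypothetical `events` table with a `created_at` timestamp and a matching `events_archive` table, and it moves old rows inside a single transaction so that a failure cannot lose or duplicate data.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE events (
    id         INTEGER PRIMARY KEY,
    payload    TEXT NOT NULL,
    created_at TEXT NOT NULL        -- ISO 8601 text sorts chronologically
);
CREATE TABLE events_archive (
    id         INTEGER PRIMARY KEY,
    payload    TEXT NOT NULL,
    created_at TEXT NOT NULL
);
-- The retention job filters on created_at, so index it.
CREATE INDEX idx_events_created_at ON events (created_at);
""")

def archive_before(conn, cutoff):
    """Move events older than cutoff into the archive table; return rows moved."""
    with conn:  # commits on success, rolls back if either statement fails
        conn.execute(
            "INSERT INTO events_archive (id, payload, created_at) "
            "SELECT id, payload, created_at FROM events WHERE created_at < ?",
            (cutoff,),
        )
        moved = conn.execute(
            "DELETE FROM events WHERE created_at < ?", (cutoff,)
        ).rowcount
    return moved

conn.executemany(
    "INSERT INTO events (payload, created_at) VALUES (?, ?)",
    [("signup", "2022-03-01"), ("login", "2023-06-15"), ("purchase", "2024-02-01")],
)
conn.commit()

moved_count = archive_before(conn, "2024-01-01")
active_count = conn.execute("SELECT COUNT(*) FROM events").fetchone()[0]
archived_count = conn.execute("SELECT COUNT(*) FROM events_archive").fetchone()[0]
```

The active table shrinks to recent rows only, while the archive keeps the full history available for occasional queries.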

Consider the cost of storage. Storing unnecessary data drives up costs and slows down backups. Regular audits of the data model help identify orphaned tables or unused attributes that can be removed.

Security and Access Control 🔒

Security is often overlooked in ERD design. However, data relationships define access boundaries. Role-based access control (RBAC) should be reflected in the data structure.

Row-Level Security: Design tables to support row-level security. This ensures that users only access data relevant to their role without complex application logic.

Audit Trails: Include fields to track who created or modified a record. This is vital for compliance and debugging issues in complex systems.

Data Classification: Tag sensitive data within the schema. This allows automated tools to enforce encryption or masking policies on specific columns.
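Of the three items above, audit trails translate most directly into schema. The sketch below is one possible approach, assuming a hypothetical `accounts` table with an `updated_by` column and a trigger that copies each balance change into an `accounts_audit` table. Row-level security and column tagging depend on database-specific features and are not shown.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE accounts (
    id            INTEGER PRIMARY KEY,
    owner         TEXT NOT NULL,
    balance_cents INTEGER NOT NULL,
    updated_by    TEXT NOT NULL       -- who last modified the row
);
CREATE TABLE accounts_audit (
    audit_id          INTEGER PRIMARY KEY,
    account_id        INTEGER NOT NULL,
    old_balance_cents INTEGER NOT NULL,
    new_balance_cents INTEGER NOT NULL,
    changed_by        TEXT NOT NULL,
    changed_at        TEXT NOT NULL DEFAULT (datetime('now'))
);
-- Record every balance change, regardless of which code path made it.
CREATE TRIGGER trg_accounts_audit
AFTER UPDATE OF balance_cents ON accounts
BEGIN
    INSERT INTO accounts_audit (account_id, old_balance_cents, new_balance_cents, changed_by)
    VALUES (OLD.id, OLD.balance_cents, NEW.balance_cents, NEW.updated_by);
END;
""")

conn.execute("INSERT INTO accounts VALUES (1, 'ada', 1000, 'system')")
conn.execute("UPDATE accounts SET balance_cents = 750, updated_by = 'ada' WHERE id = 1")

audit_rows = conn.execute(
    "SELECT account_id, old_balance_cents, new_balance_cents, changed_by FROM accounts_audit"
).fetchall()
```

Placing the audit logic in a trigger means the trail cannot be skipped by an application path that forgets to write it, at the cost of one extra insert per update.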

By embedding security considerations into the diagram, you reduce the risk of data leaks and simplify compliance audits. Relationships should not expose sensitive data to unauthorized entities, even through indirect joins.

Conclusion on Sustainable Architecture 🛡️

Building a scalable Entity Relationship Diagram requires a balance between theoretical integrity and practical performance. It demands a deep understanding of how data interacts under load. By focusing on clear relationships, strategic normalization, and forward-thinking design patterns, systems can accommodate growth without friction.

Regular maintenance and documentation ensure the model remains relevant as business needs shift. Avoiding common pitfalls and prioritizing security from the start creates a foundation that supports long-term success. The goal is not just to store data, but to structure it in a way that empowers the entire organization to move forward efficiently.